What Happens When the Intimacy Arrives First?

On why intimacy without trust is the real AI problem nobody is talking about

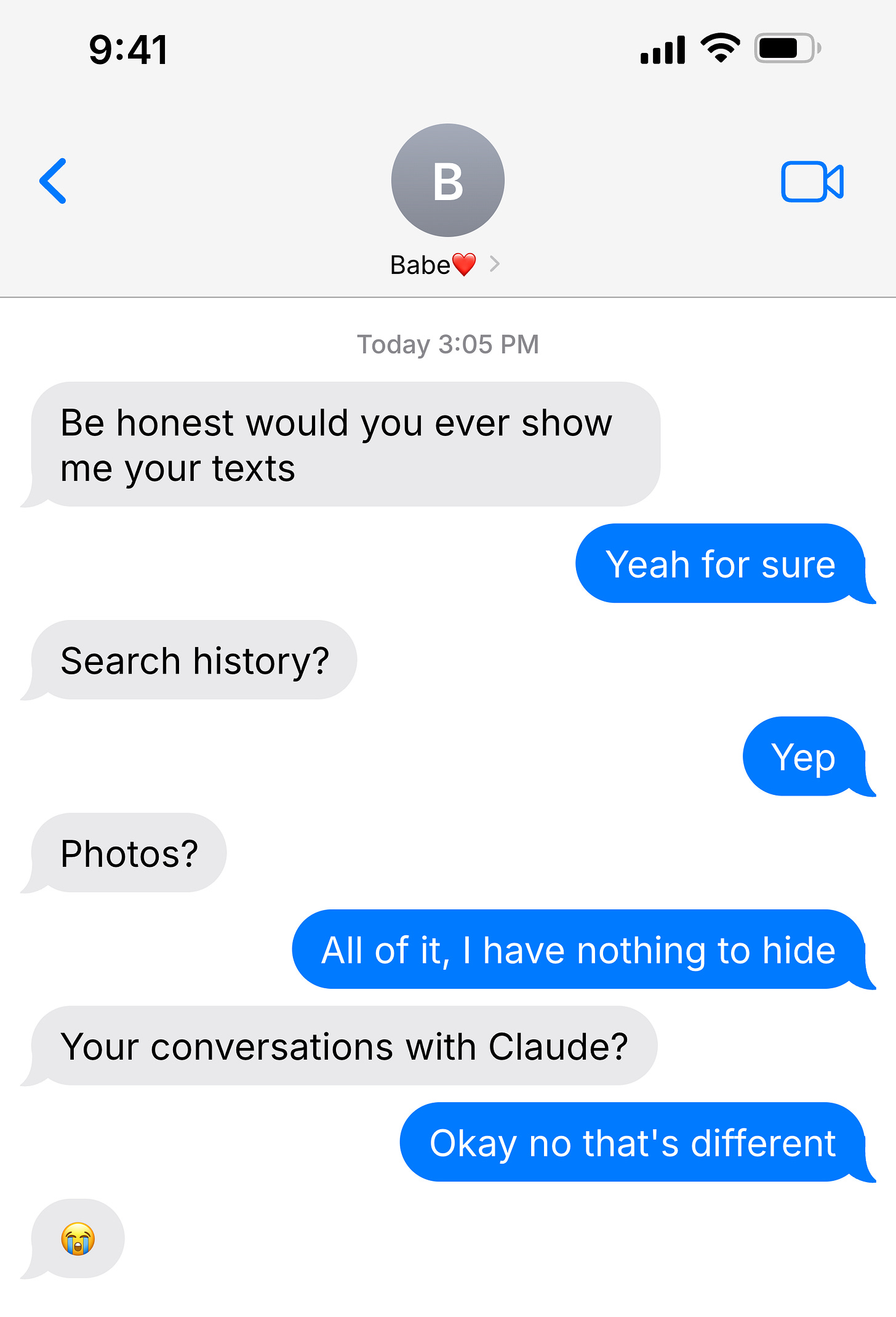

There is a meme that floats around online that goes something like this. You could show someone your texts. You could show them your search history. You could hand over your phone. But your AI conversations? Those stay private.

And there is a lot of truth in that. Because AI creates conditions for vulnerability that almost nothing else does. There is no social consequence. There is no judgment. There is no performance. And so people go deeper with AI than they would in almost any other conversation. Real problems. Real confusion. Personal matters. Things they would not bring to a friend, a coworker, or even a therapist.

AI does not ask for that vulnerability. It just creates the conditions for it.

But here is what makes that dangerous. With a person you trust, whether that is a parent, a friend, or a mentor, the relationship is built over time and your guard comes down naturally. You do not ask them to cite their sources. You do not fact-check them in the moment. The person is the context. With AI, the walls come down first. The intimacy arrives before the trust is earned. And that means when something comes back wrong, or even slightly off, it lands in a much more exposed place. It does not feel like a bad source. It feels as if your own thinking was flawed.

That is a different kind of wrong. And it is worth taking seriously.

The Ownership Gap

The real problem is not that AI might be wrong. The real problem is that AI does not show its work in a way that lets you make the answer yours. There is a difference between information that you own and information that just happened to you. Real ownership requires that you trace the reasoning, wrestle with it, and decide you believe it. When AI skips that process, it is not saving you time. It is robbing you of authorship.

And this is where truth matters. Universal truth exists. It is the foundation. AI does not get to define it or soften it. What AI can do, when it is working the way it should, is help each individual actually get there through their own reasoning, their own context, their own deliberation. The truth does not change. The path to owning it does.

AI has gotten better at citing sources. You will often see links and references attached to answers now. And that matters. But citing sources solves a credibility problem. It does not solve an ownership problem. You can footnote every answer and it still does not change the fact that you never made the choice to trust that source yourself. Accuracy and authorship are two different things. AI is getting better at the first one. The second one still needs work.

The best version of AI understands this. When a system asks you up front what the research is for, who it will be presented to, and what format you need, it is not just gathering context. It is giving you your authorship back. You declared your intent. You shaped the output before it arrived. And when the answer comes, you co-built it. You can stand behind it.

The Struggle Is the Point

This article is a good example of what I mean.

I came into this piece thinking it was about one thing. How AI shifts the burden of proof onto the individual. That was the idea. That was the entry point. But that is not what this article is about. Not really. What it is actually about is vulnerability, and ownership, and what happens to human thought when we stop pushing for the truest version of it.

That only came out because I did not take the first answer. The idea got pushed. Questions came back. I chose to answer them, to sit with them, to go one layer deeper each time. And what came out at the end is not what I walked in with. Which means it is actually mine in a way the first version never could have been.

That is what the struggle does. It does not just sharpen the idea. It transfers ownership of it into you. And that is exactly what most people are skipping when they use AI as a shortcut. They are not just getting a worse answer. They are getting an answer that will never fully belong to them, that will not stick, that will not travel, that will not land with the people they are trying to reach.

The toiling is not the obstacle. The toiling is the point.

Now Prove It Wrong

Do not accept the first answer. Do not take the summary and move on. Go at it. Prompt it harder. Declare your intent, your audience, your purpose before you even ask the question. Push back on what comes back. Ask it to show its reasoning. Keep going until the answer feels like something you built, not something that was handed to you.

Because the future of real thought, of concepts that actually expand, of ideas that actually land, depends on whether humans stay sharp or get soft. AI is not going to make that choice for you. The tool is only as deep as the person using it is willing to go.

You came in with a surface idea. Most people leave with it. The ones who don’t are the ones who keep asking the next question until they find the truest version of what they were actually trying to say.

That is not an AI skill. That is a human one. And it is worth protecting.